Object detection using GPU on Windows is about 5 times slower than on Ubuntu · Issue #1942 · tensorflow/models · GitHub

TensorFlow Performance with 1-4 GPUs -- RTX Titan, 2080Ti, 2080, 2070, GTX 1660Ti, 1070, 1080Ti, and Titan V

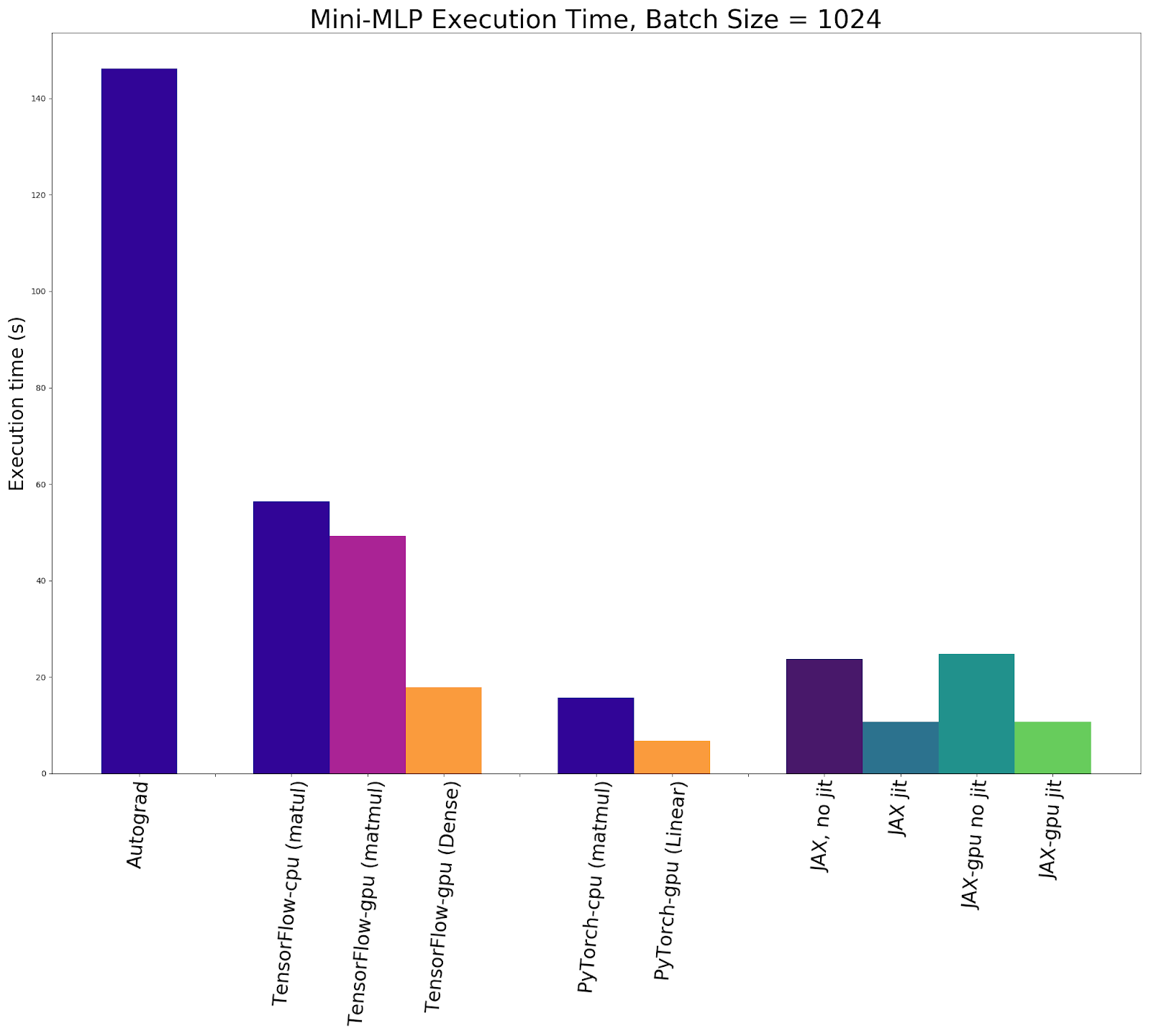

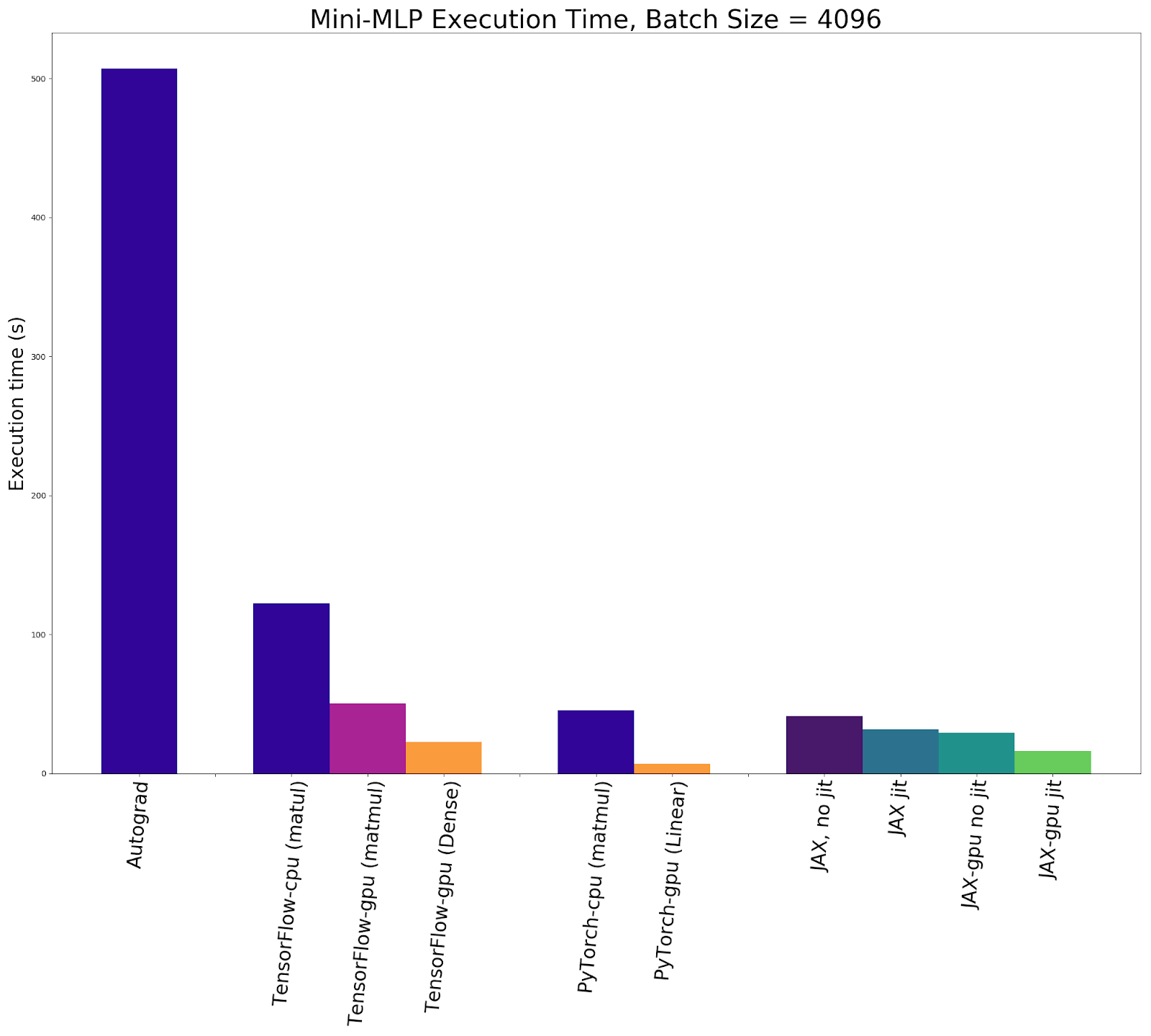

Accelerated Automatic Differentiation with JAX: How Does it Stack Up Against Autograd, TensorFlow, and PyTorch? | Exxact Blog
